ActiveWin: Reviews


Product: 3D Prophet III

HRAA

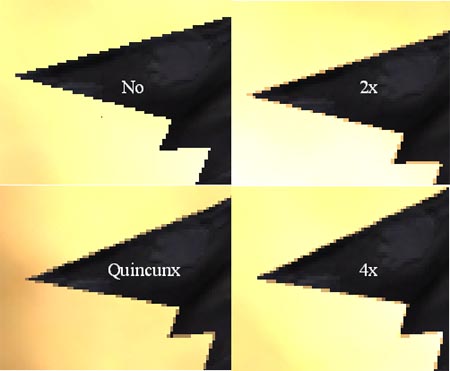

HRAA stands for High Resolution Anti-Aliasing. The technology was first introduced by the late 3dfx with the Voodoo5 5500, and over the past two years it has taken on an important role. The purpose of HRAA is to remove aliasing artifacts such as crawling pixels and stair-stepped edges, making the 3D scene more realistic.

With the release of the GeForce 2, NVIDIA introduced a basic but effective anti-aliasing method called supersampling, available at 2x or 4x levels (that is, using 2 or 4 times the resolution). It works like this: when supersampling is enabled, the GPU internally renders the scene at a higher resolution than the one it will be displayed in, then scales it back down by applying a filter before the scene is finally displayed. The major drawback of this technique is that it really slows down rendering, resulting in a poor frame rate. NVIDIA’s answer to this problem is the new Quincunx mode brought by the GeForce 3. This new technique is supposed to give the visual quality of 4x anti-aliasing at the speed of 2x. To do so, Quincunx (no, this is not slang!) interpolates pixels more efficiently than before: instead of using only two points of the scene to interpolate colors, it also blends in samples from adjacent pixels.
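The supersampling idea described above can be sketched in a few lines of Python. This is only an illustration, not NVIDIA's implementation: the `shade` scene (a single slanted edge), the function names and the plain box filter are all assumptions made for the example, and `factor=2` here means twice the resolution on each axis, so four samples per displayed pixel.

```python
def shade(u, v):
    """Hypothetical scene: white (1.0) below a slanted edge, black (0.0) above."""
    return 1.0 if v > 0.5 * u + 0.2 else 0.0


def render(width, height):
    """Point-sample the scene once per pixel, at the pixel center."""
    return [[shade((x + 0.5) / width, (y + 0.5) / height) for x in range(width)]
            for y in range(height)]


def supersample(width, height, factor):
    """Render at `factor` times the resolution per axis, then box-filter down."""
    hi_res = render(width * factor, height * factor)
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            # Average the factor x factor block of samples covering this pixel.
            block = [hi_res[y * factor + j][x * factor + i]
                     for j in range(factor) for i in range(factor)]
            row.append(sum(block) / len(block))
        image.append(row)
    return image


if __name__ == "__main__":
    for row in supersample(8, 8, 2):
        print(" ".join(f"{p:.2f}" for p in row))
```

Without supersampling every pixel is either fully black or fully white, which is what produces the staircase along the edge. With it, pixels crossed by the edge take intermediate grey values, at the price of shading four times as many samples.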

Below are the official performance figures announced by NVIDIA when using Quincunx with Quake III Arena:

GeForce3, 2x : 85 fps

GeForce3, Quincunx AA : 72 fps

GeForce3, 4x : 51 fps

GeForce2 Ultra, 4x : 35 fps

Reading these numbers, you’ll instantly notice that with 4x anti-aliasing the GeForce 3 is about 46% faster than its predecessor (51 fps against 35 fps), even with a lower fillrate. This demonstrates the efficiency of the Lightspeed Memory Architecture.
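For readers who want to check the gaps between these scores themselves, here is a small Python sketch. It uses only the four frame rates quoted above; the dictionary layout and the `percent_faster` helper are made up for the example.

```python
# Frame rates quoted above (Quake III Arena, NVIDIA's own figures).
FPS = {
    ("GeForce3", "2x"): 85,
    ("GeForce3", "Quincunx"): 72,
    ("GeForce3", "4x"): 51,
    ("GeForce2 Ultra", "4x"): 35,
}


def percent_faster(new_fps, old_fps):
    """How much faster new_fps is than old_fps, in percent."""
    return (new_fps / old_fps - 1.0) * 100.0


# GeForce 3 against GeForce 2 Ultra, both at 4x.
gf3_vs_gf2_4x = percent_faster(FPS[("GeForce3", "4x")], FPS[("GeForce2 Ultra", "4x")])
# Quincunx against plain 4x on the GeForce 3.
quincunx_vs_4x = percent_faster(FPS[("GeForce3", "Quincunx")], FPS[("GeForce3", "4x")])
# Frame rate lost when going from 2x to Quincunx on the GeForce 3.
quincunx_cost_vs_2x = -percent_faster(FPS[("GeForce3", "Quincunx")], FPS[("GeForce3", "2x")])

if __name__ == "__main__":
    print(f"GeForce3 4x vs GeForce2 Ultra 4x: +{gf3_vs_gf2_4x:.1f}%")
    print(f"Quincunx vs 4x: +{quincunx_vs_4x:.1f}%")
    print(f"Quincunx cost vs 2x: -{quincunx_cost_vs_2x:.1f}%")
```

On these figures Quincunx sits much closer to the 2x score than to the 4x one, which is consistent with NVIDIA's "4x quality at 2x speed" pitch as far as speed is concerned.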

Since the GeForce 3 supports multisampling, it can perform all the DirectX 8.0 effects such as motion blur and depth of field. Reflections are also more realistic than ever when using soft reflections or soft shadows, especially when an object is reflected on a defined material. Unlike the new features introduced by the nFinite FX engine, current games can take advantage of HRAA right now and don’t need to be adapted.

Transform & Lighting

Surprising as it may be, the Transform & Lighting engine so actively promoted by NVIDIA is now nearly dead! Its successor, nFinite FX, is more than worthy of the name, but the GeForce 3 still includes a hardwired transform and lighting engine, less elaborate than in previous generations, to ensure compatibility with games designed for DirectX 7.0 and with others like Quake III (the hardwired T&L also saves execution time if a game doesn't require the services of the Vertex Shader).

nFinite FX

The headline feature of the GeForce 3 is its redesigned graphics engine called, in all modesty, nFinite FX. This new engine combines two modules in one, the Pixel Shaders and the Vertex Shaders, in order to take full advantage of the latest DirectX 8.0 instruction set.

First you have to know what a vertex is: a vertex is a corner of a triangle, where two edges meet, and thus every triangle is composed of three vertices. A vertex usually carries several pieces of information, such as its coordinates, weight, normal, color, texture coordinates, fog and point size data. A shader is a small program that executes mathematical operations to alter this data, so that a new vertex emerges with a different color, different textures, or a different position in space. Shaders are run by the GPU (so they don't consume CPU horsepower): Vertex Shaders act on the data associated with the vertices of every polygon, while Pixel Shaders act on the pixels.

Now let's look at the Pixel Shaders. Every 3D scene is ultimately composed of pixels, and the GeForce 3 comes with 4 Pixel Shaders that convert a set of texture coordinates (s, t, r, q) into a color (ARGB) using a shader program. Pixel shaders use floating point math, texture lookups and the results of previous pixel shaders. A pixel shader can execute programmed texture address operations on up to four textures and then run eight freely programmed texture blending instructions that combine the pixel's color information with the data derived from up to four different textures. A combiner then adds specular lighting and fog effects, and the pixel is alpha-blended according to its opacity. Compared to the GeForce 2's NSR, the GeForce 3 pixel shaders are definitely more advanced. They fetch two texels per clock cycle, allowing up to four textures per pass.
When a game attempts to apply more than two textures per pixel, the GeForce 3's pixel shaders require 2 clock cycles for three or four textures, but still do the job in a single pass where the GeForce 2 needs several. Finally, the GeForce 3 pixel shaders save valuable memory bandwidth: when you render 3 or 4 textures per pixel, the GeForce 3 requires only 1600MB/s while the GeForce 2 would consume 3200MB/s. These pixel shaders are essential to bring movie-style games to your PC, since they process a huge load of data. Until now no graphics processor was able to render such effects in real time.

With 27 new instructions for the Vertex Shader and 23 new instructions for the Pixel Shader, game developers are freer than ever to express their creativity and realize the craziest things their imagination suggests. In other words, the vertex shaders inject personality into characters and environments, while the pixel shaders create ambiance with materials and surfaces that mimic reality. With this technology, developers no longer rely only on hardwired instructions from NVIDIA: they can also create and upload their own algorithms into the GPU, bringing brand new graphic effects to life!

Because of this flexibility, listing every effect the GeForce 3 can handle is simply impossible, but here are some of the best-known ones now supported: enhanced Matrix Palette Skinning, Keyframe Animation (used for 3D morphing), and distortion of 3D objects to simulate waves, wind, etc. The only limit developers will face is that a vertex shader can’t exceed 128 instructions.
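To make the vertex shader idea a bit more concrete, here is a rough Python sketch of the "small program run once per vertex" model, using the wave distortion mentioned above as the effect. Real shaders are written in the DirectX 8 shader language and executed by the GPU, not in Python; the `Vertex` class, `wave_shader` and `run_vertex_shader` are hypothetical names invented for this illustration.

```python
import math
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Vertex:
    position: tuple  # (x, y, z)
    color: tuple     # (r, g, b)


def wave_shader(vertex, time, amplitude=0.25, frequency=2.0):
    """Hypothetical shader: push each vertex up or down along a sine wave."""
    x, y, z = vertex.position
    offset = amplitude * math.sin(frequency * x + time)
    # A new vertex "emerges" with a different position; its color is untouched.
    return replace(vertex, position=(x, y + offset, z))


def run_vertex_shader(shader, vertices, **constants):
    """Apply the same small program independently to every vertex."""
    return [shader(v, **constants) for v in vertices]


# A flat strip of five white vertices along the x axis.
mesh = [Vertex((i * 0.5, 0.0, 0.0), (1.0, 1.0, 1.0)) for i in range(5)]
waved = run_vertex_shader(wave_shader, mesh, time=0.0)

if __name__ == "__main__":
    for before, after in zip(mesh, waved):
        print(before.position, "->", after.position)
```

Each vertex is processed on its own, with no knowledge of its neighbours. That independence is what lets the hardware run the same program over a whole mesh very quickly.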

Both of these modules open the door to highly realistic games that will definitely take your breath away, taking the visual experience to a new level, especially when combined with Environment Mapped Bump Mapping (EMBM). Remember when Matrox introduced its G400 series of graphics cards? A brand new and unique 3D feature called Environment Mapped Bump Mapping appeared with it. The GeForce 3 at last includes this technology, which simulates relief in 3D scenes by playing with light effects. EMBM is currently the most realistic bump mapping technique, as shown by one of the 3DMark 2001 scenes. Games optimized for the GeForce 3 GPU will feature more fluid and more accurate graphics, with an unprecedented level of detail, thanks to the technologies explained above.

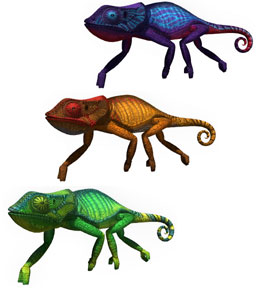

Above are shots from NVIDIA technology demos and games that are optimized for the GeForce 3.

Higher Order Surfaces

Behind this name lies a new technology designed to save AGP bus bandwidth. According to NVIDIA, AGP can limit the performance of its new GPU. They gave the following example to highlight this potential problem: a scene that contains 100,000 triangles, each composed of 3 vertices of approximately 50 bytes each. That makes 14.3 MB of geometry per frame, so at 60 frames per second about 858 MB must pass through the AGP port every second. The problem is that the AGP 4x port can only offer, in the best case, a 1 GB/s transfer rate (roll on AGP 8x!), so games could become bandwidth-limited within a few months (even if few current games use that many triangles!).

The workaround NVIDIA uses to fix this potential problem comes once again from Microsoft and its DirectX 8.0 APIs (application programming interfaces). The GeForce 3 now supports the Higher Order Surfaces feature introduced by DirectX 8.0. HOS replaces heavy-to-transfer triangles with surfaces defined by a set of control points, which are much faster to transfer while remaining totally flexible thanks to mathematical functions. With this feature, surfaces are described by curves, saving part of the AGP bandwidth instead of clogging it with triangles. The advantage is obvious: drawing a simple sphere requires thousands of traditional triangles, while only 4 rectangular patches (and some functions) are needed when using HOS. Once this information is sent to the GeForce 3, the GPU performs the tessellation, transforming the HOS into a larger or smaller number of polygons depending on the requested level of detail. To enjoy this feature, games obviously need a redesigned 3D engine that supports it, which is not the case for most games today.
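Going back to NVIDIA's AGP example, the arithmetic is easy to reproduce. The sketch below uses only the figures quoted in the example; the "1 GB/s" best case for AGP 4x is taken as 1024 MB/s, which is an assumption made for the comparison, and the constant names are made up.

```python
# Figures from NVIDIA's example scene.
TRIANGLES = 100_000
VERTICES_PER_TRIANGLE = 3
BYTES_PER_VERTEX = 50
FRAMES_PER_SECOND = 60

# Assumption: the "1 GB/s" best case for AGP 4x is taken as 1024 MB/s.
AGP_4X_MB_PER_SECOND = 1024

MB = 1024 * 1024  # bytes per megabyte, binary convention

bytes_per_frame = TRIANGLES * VERTICES_PER_TRIANGLE * BYTES_PER_VERTEX
mb_per_frame = bytes_per_frame / MB
mb_per_second = mb_per_frame * FRAMES_PER_SECOND
agp_share = mb_per_second / AGP_4X_MB_PER_SECOND

if __name__ == "__main__":
    print(f"Geometry per frame : {mb_per_frame:.1f} MB")
    print(f"Geometry per second: {mb_per_second:.0f} MB/s")
    print(f"Share of AGP 4x    : {agp_share:.0%}")
```

In this example the raw triangle data alone would eat well over three quarters of the bus before any texture has been transferred.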
Game developers will also have to choose between the two kinds of HOS supported by DirectX 8.0, N-Patches or RT-Patches (rectangular/triangular): RT-Patches are more flexible and give a better look, while N-Patches are easier to use :). HOS paves the way for faster and more realistic games until AGP 8x arrives.
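As an illustration of the tessellation step, here is a minimal Python sketch that turns one rectangular patch into triangles at a chosen detail level. It assumes a bicubic Bézier patch with a 4x4 grid of control points, which is one common kind of rectangular patch; the control grid and function names are invented for the example, and this is not how the GeForce 3 does it internally.

```python
def bernstein3(t):
    """The four cubic Bernstein weights at parameter t in [0, 1]."""
    s = 1.0 - t
    return (s * s * s, 3 * s * s * t, 3 * s * t * t, t * t * t)


def eval_patch(ctrl, u, v):
    """Point on a bicubic Bezier patch defined by a 4x4 grid of (x, y, z)."""
    bu, bv = bernstein3(u), bernstein3(v)
    return tuple(
        sum(bu[i] * bv[j] * ctrl[i][j][k] for i in range(4) for j in range(4))
        for k in range(3)
    )


def tessellate(ctrl, level):
    """Split the patch into a level x level grid, two triangles per cell."""
    n = level + 1
    vertices = [eval_patch(ctrl, i / level, j / level)
                for i in range(n) for j in range(n)]
    triangles = []
    for i in range(level):
        for j in range(level):
            a = i * n + j      # this corner of the cell
            b = a + 1          # next vertex along v
            c = a + n          # next vertex along u
            d = c + 1          # opposite corner
            triangles += [(a, b, c), (b, d, c)]
    return vertices, triangles


# Hypothetical control grid: a flat 3x3 square with a raised bump in the middle.
CONTROL = [[(float(i), float(j), 1.0 if i in (1, 2) and j in (1, 2) else 0.0)
            for j in range(4)] for i in range(4)]

if __name__ == "__main__":
    for level in (2, 8, 32):
        vertices, triangles = tessellate(CONTROL, level)
        print(f"level {level}: {len(vertices)} vertices, {len(triangles)} triangles")
```

Only the 16 control points would have to cross the AGP bus. The number of triangles generated from them grows with the square of the detail level, and in the HOS scheme that work is done on the GPU side.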